26 Presenting the Data

Chapter 19 introduced the importance of partitioning sources of variability in the response. While our initial discussion of partitioning variability focused on computing a p-value, the idea permeates all aspects of an analysis. In this chapter, we see how this partitioning is behind the graphical and numerical summaries we construct to address our research objective.

We have already argued that variability makes addressing questions difficult. If every subject in the Organic Food Case Study had the same moral expectation when exposed to the same food type, there would be no need for statistics. We would simply evaluate one subject under each food type and have our conclusion. The discipline of Statistics exists because of the ambiguity created by variability in the responses. As a result of this variability, our statistical graphics (and later our model for the data-generating process) must distinguish the various sources of variability. That is, with any analysis, we try to answer the question “why aren’t all the values the same? What are the reasons for the differences we are observing?”

From the Organic Food Case Study, consider the primary question of interest:

Is there evidence of a relationship between the type of food a person is exposed to and their average moral expectations, following exposure?

We are really asking “does the food exposure help explain the differences in the moral expectations of individuals?” We know that there are differences in moral expectations between individuals. But are these differences solely due to natural variability (some people are just inherently more or less strict in their moral beliefs, possibly because of how they were raised), or is there some systematic component that explains at least a portion of the differences between individuals?

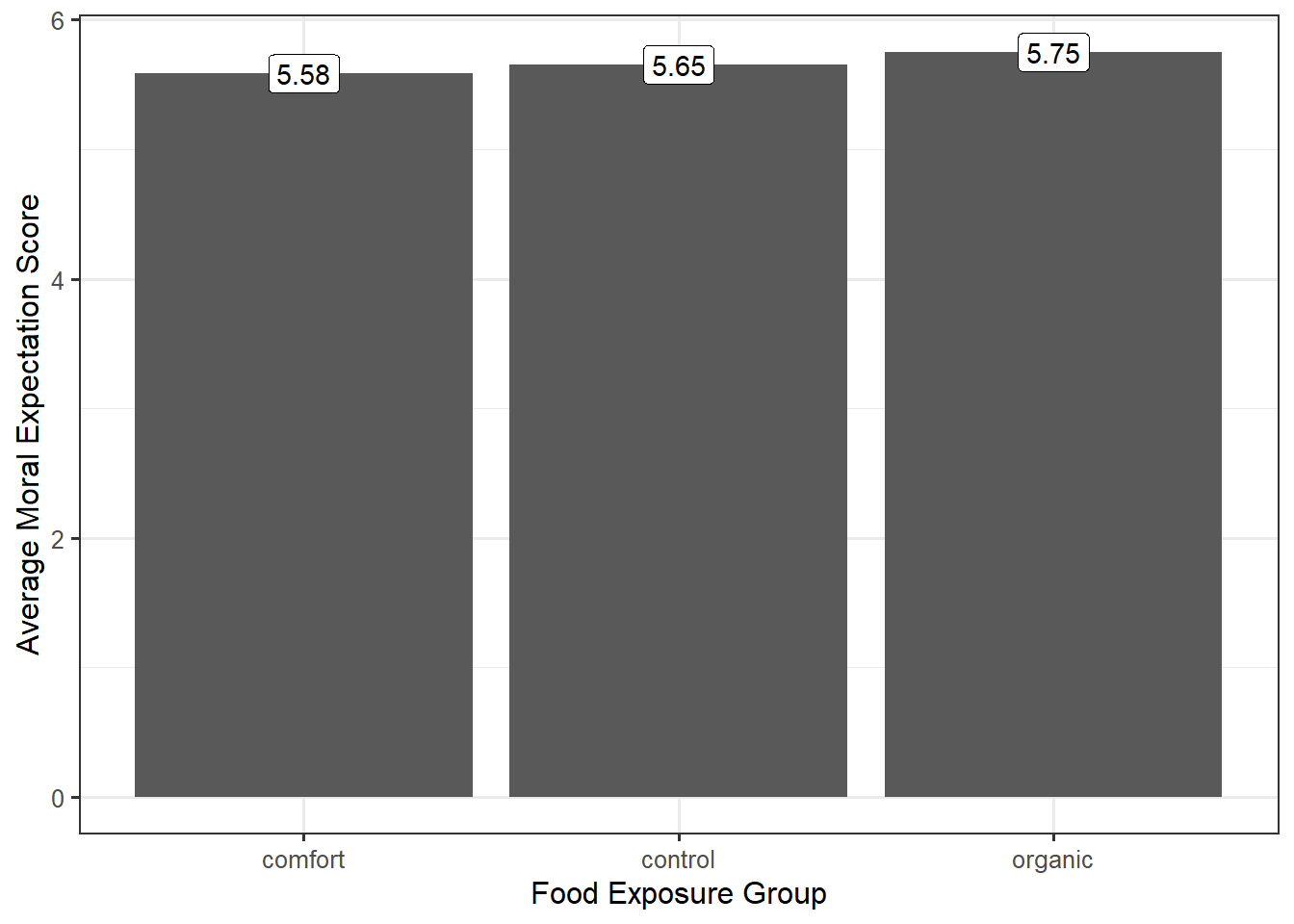

A good graphic must then tease out how much of the difference in the moral expectations is from subject-to-subject variability and how much is due to the food exposure. When our response and predictor were both quantitative, a scatterplot was appropriate (Chapter 16). When we are examining the relationship between a quantitative response and a factor (categorical predictor), a scatterplot may no longer be the best way to visualize the data; however, the goal remains the same — address the research objective and tease out the various sources of variability in the response. Consider a common graphic that is not useful in this situation (Figure 26.1).

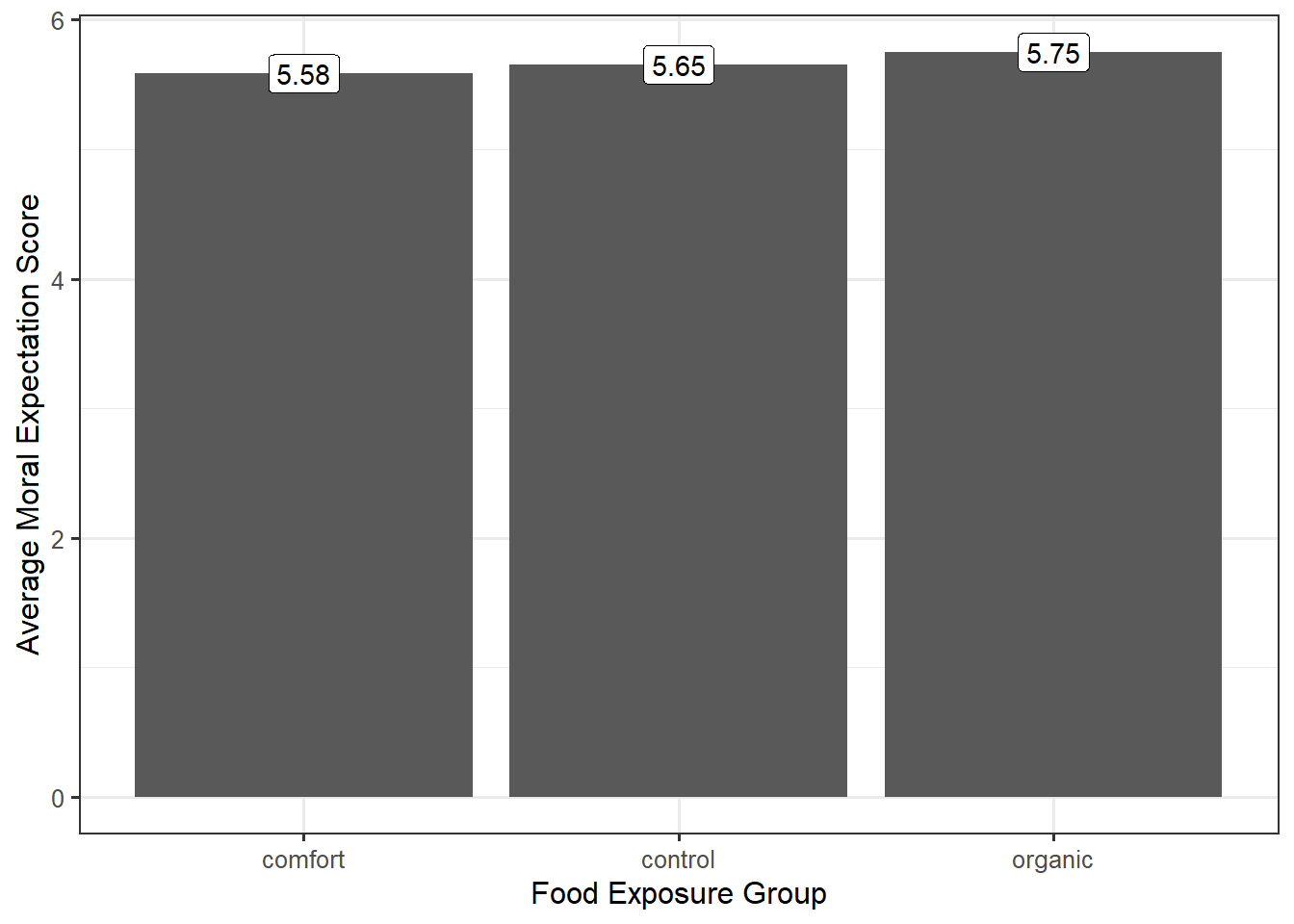

Figure 26.1 does a good job of indicating the variability between the groups (different groups have potentially different average responses). However, recall that every signal should be assessed against the noise. Our graphic needs to not only compare the differences between the groups but also allow the viewer to get a sense of the variability within the group. A common way of doing this within engineering and scientific disciplines is to construct side-by-side boxplots, as illustrated in Figure 26.2.

From the graphic, we see that the moral expectation scores seem to have nearly the same pattern in each of the exposure groups. Moreover, the center of each of the groups is roughly the same. That is, there does not appear to be any evidence that the type of food to which a subject is exposed is associated with average moral expectation score.

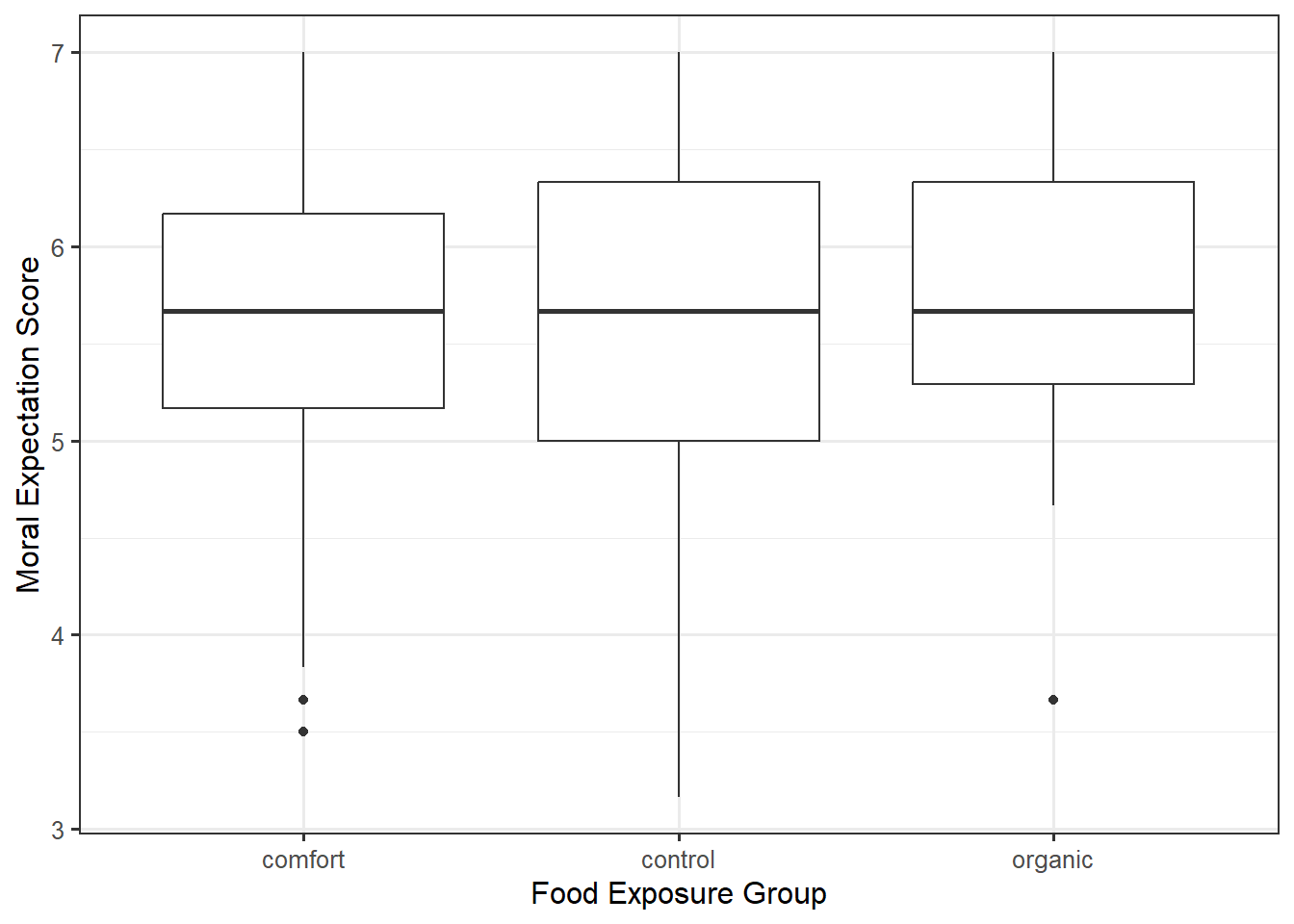

Side-by-side boxplots can be helpful in comparing large samples as they summarize the location and spread of the data. When the sample is smaller, it can be helpful to overlay the raw data on the graphic in addition to the summary provided by the boxplot. We might also consider adding further information, such as the mean within each group. An alternative to boxplots is to use violin plots, which emphasize the shape of the distribution instead of summarizing it like boxplots; again, this is most helpful when the sample size is large. Yet another option is to construct a density plot for each group and overlay them on the same plot; this too is most appropriate for larger samples. Overlaying densities on the same graphic becomes more challenging as the number of groups grows; in these cases, side-by-side graphics tend to be more effective. A comparison of these approaches is in Figure 26.3.
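To make concrete what a side-by-side boxplot summarizes, the following minimal sketch computes the five-number summary (plus the mean) within each group. The scores and the group labels `type_A`, `type_B`, and `type_C` are hypothetical values made up for illustration; they are not the Organic Food Case Study data. The quartile convention used here is one of several in common use, so software that draws boxplots may report slightly different quartiles.

```python
from statistics import mean, quantiles

# Hypothetical scores, invented for illustration only
# (these are NOT the case-study data).
scores = {
    "type_A": [5.1, 6.3, 4.8, 5.9, 7.2, 5.5, 6.0, 4.4],
    "type_B": [5.4, 6.1, 5.0, 6.6, 4.9, 5.8, 6.9, 5.2],
    "type_C": [5.7, 4.6, 6.4, 5.3, 6.8, 5.0, 6.2, 5.6],
}


def boxplot_summary(values):
    """Return the five-number summary and the mean for one group."""
    # method="inclusive" interpolates between observed values;
    # other quartile conventions give slightly different answers.
    q1, median, q3 = quantiles(values, n=4, method="inclusive")
    return {
        "min": min(values),
        "q1": q1,
        "median": median,
        "q3": q3,
        "max": max(values),
        "mean": mean(values),
    }


# One summary per group: the numbers behind each box in the plot.
summaries = {group: boxplot_summary(vals) for group, vals in scores.items()}

for group, summary in summaries.items():
    rounded = {name: round(value, 2) for name, value in summary.items()}
    print(group, rounded)
```

Placing the three summaries next to one another is the numerical analogue of the side-by-side graphic: the medians describe how the groups differ in location, while the distance from the first to the third quartile describes the spread within each group.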

If you have fewer than 10 observations in a group, it is more appropriate to use an individual value plot (equivalent to a scatterplot when one axis is a categorical variable) or a jitter plot. Having the raw data is important in these cases.
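As a rough sketch of how a jitter plot might be built, the code below adds a small random horizontal offset to each observation so that points sharing a value do not hide one another. The data, the group labels, and the helper names `jitter_positions` and `plot_individual_values` are all hypothetical. The plotting helper assumes the matplotlib library is installed; it is only one of many tools that could draw such a plot.

```python
import random

# Hypothetical small sample (fewer than 10 observations per group),
# invented for illustration only.
small_groups = {
    "type_A": [5.0, 5.0, 6.5, 4.0, 7.0],
    "type_B": [5.5, 6.0, 6.0, 4.5, 5.0],
    "type_C": [6.0, 4.5, 5.5, 5.5, 6.5],
}


def jitter_positions(groups, width=0.15, seed=1):
    """Return x and y coordinates for a jitter plot.

    Group i is centered at x = i; each point is shifted left or right
    by at most `width`. The y-values (the responses) are never altered.
    """
    rng = random.Random(seed)  # fixed seed so the plot is reproducible
    xs, ys = [], []
    for index, values in enumerate(groups.values()):
        for y in values:
            xs.append(index + rng.uniform(-width, width))
            ys.append(y)
    return xs, ys


def plot_individual_values(groups, path="individual_values.png"):
    """Draw the jittered raw data and save it to `path` (needs matplotlib)."""
    import matplotlib
    matplotlib.use("Agg")  # render to a file; no display required
    import matplotlib.pyplot as plt

    xs, ys = jitter_positions(groups)
    fig, ax = plt.subplots()
    ax.scatter(xs, ys, alpha=0.7)
    ax.set_xticks(range(len(groups)))
    ax.set_xticklabels(list(groups))
    ax.set_xlabel("Food exposure group")
    ax.set_ylabel("Moral expectation score")
    fig.savefig(path)
    plt.close(fig)
    return path


xs, ys = jitter_positions(small_groups)
```

Calling `plot_individual_values(small_groups)` would write the figure to a file. Only the horizontal position is jittered; the vertical position of every point remains the observed response, so the raw data are shown faithfully.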

Each of the plots in Figure 26.3 is reasonable. What makes them useful in addressing the research question is that in each plot, we can compare the degree to which the groups differ relative to the variability within a group. That is, we partition the variability. With each plot, we can say that one of the reasons the groups differ is exposure to different food types; however, this difference is extremely small relative to the fact that, regardless of which food group a participant was exposed to, the variability in moral expectations within that group is quite large. Since the predominant variability in the moral expectations is the variability within the groups, we would say there is no signal here. That is, the graphics do not indicate there is any evidence that the average moral expectation scores differ across food exposure groups.
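The comparison the plots invite us to make by eye can also be sketched numerically. One common way to do so, which this chapter does not itself prescribe, is to split the total sum of squared deviations from the overall mean into a between-group piece and a within-group piece. The data and the function name `partition_variability` below are hypothetical and serve only to illustrate the idea.

```python
from statistics import mean

# Hypothetical scores, invented for illustration only
# (these are NOT the case-study data).
scores = {
    "type_A": [5.1, 6.3, 4.8, 5.9, 7.2, 5.5, 6.0, 4.4],
    "type_B": [5.4, 6.1, 5.0, 6.6, 4.9, 5.8, 6.9, 5.2],
    "type_C": [5.7, 4.6, 6.4, 5.3, 6.8, 5.0, 6.2, 5.6],
}


def partition_variability(groups):
    """Split the total sum of squares into between- and within-group parts."""
    all_values = [y for values in groups.values() for y in values]
    grand_mean = mean(all_values)
    group_means = {name: mean(values) for name, values in groups.items()}

    # Between groups: how far each group's mean sits from the overall mean,
    # weighted by the number of observations in the group.
    between = sum(
        len(values) * (group_means[name] - grand_mean) ** 2
        for name, values in groups.items()
    )
    # Within groups: how far each observation sits from its own group mean.
    within = sum(
        (y - group_means[name]) ** 2
        for name, values in groups.items()
        for y in values
    )
    # Total: how far each observation sits from the overall mean.
    total = sum((y - grand_mean) ** 2 for y in all_values)
    return between, within, total


between, within, total = partition_variability(scores)
share_between = between / total  # fraction of variability tied to the groups

print(f"between-group: {between:.3f}")
print(f"within-group:  {within:.3f}")
print(f"total:         {total:.3f}")
print(f"share between: {share_between:.1%}")
```

The between-group and within-group pieces always add up to the total. When the share attributed to the groups is small, most of the variability lies within the groups, which corresponds to the "no signal" reading of the graphics described above.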

The key to a good summary is understanding the question of interest and building a graphic that addresses the question through a useful characterization of the variability.